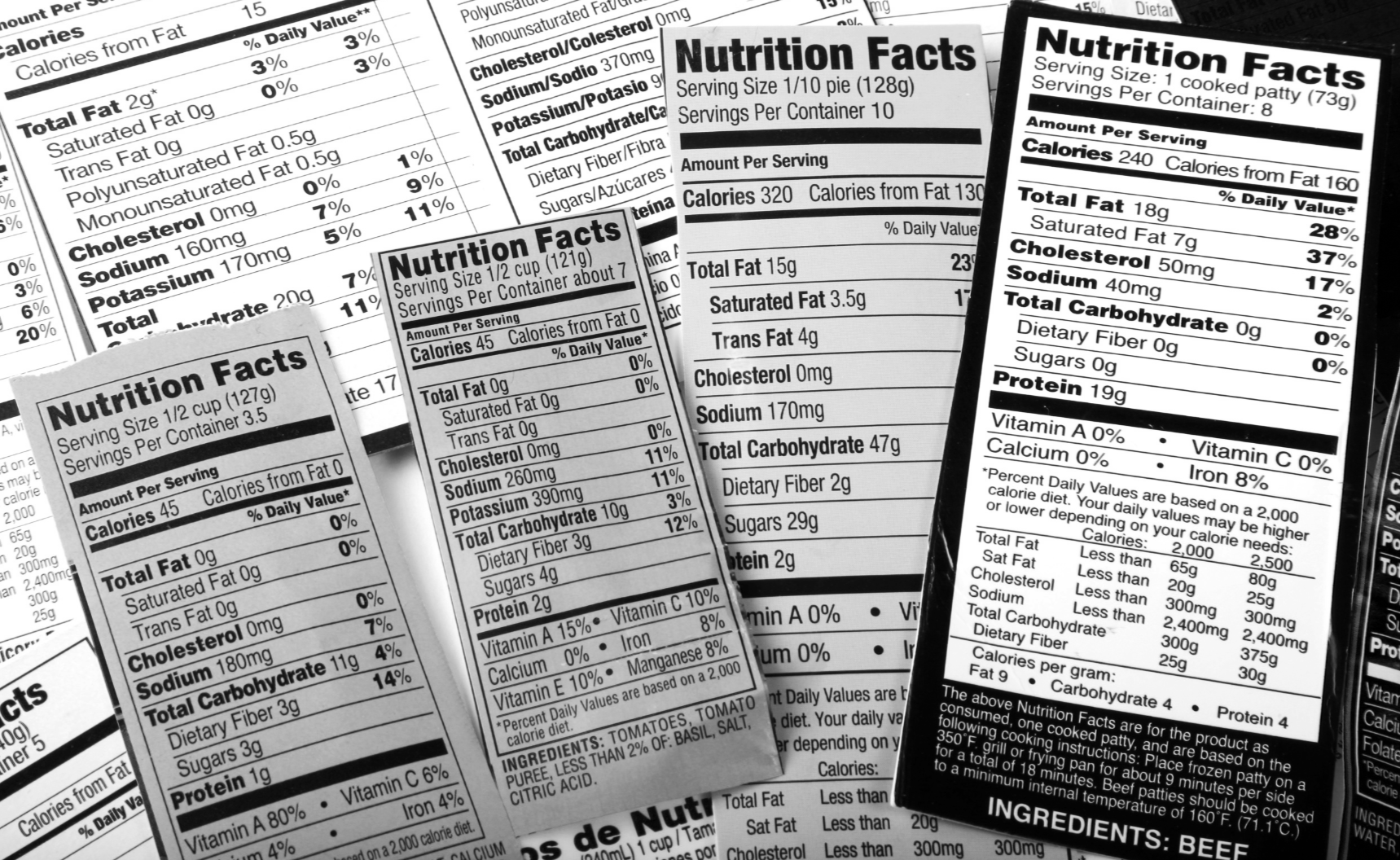

Flip the bottle over.

The good stuff is on the back.

Is AI safe to use?

Here's the honest answer.

When you're really into sauce, the temptation is to just pour it on everything without checking what's actually in the bottle first.

We've all done it. No judgment.

But there are a few things worth knowing before you pour freely. Not because AI is out to get you. Not because your data is being harvested by robots in a basement somewhere. But because a little bit of label reading goes a long way and most of it takes about sixty seconds.

Think about how you'd behave at a café with a friend. You're having a conversation, there are people around, and you wouldn't dream of reading out your credit card number, sharing confidential work documents, handing over your passwords, or announcing your home address and when you'll next be away.

AI is the friend. The internet is the café. Act accordingly.

That's genuinely most of it. The rest is just reading the label.

What information should you never share with an AI tool?

Read before you squeeze.

Every AI tool has a privacy policy and terms of service. Nobody reads them. We know. But there are a few things worth understanding before you pour freely:

Where does your data go?

Most free consumer AI tools (think: your tomato sauces) use your conversations to improve their models unless you tell them not to. That means what you type can become training data for future versions of the AI. It's not sinister. But it's worth knowing and worth adjusting in your settings if it bothers you.

Can you turn it off?

Yes, usually. ChatGPT, Claude, and Gemini all have privacy settings that let you control whether your conversations are used for training. It takes about thirty seconds to find and another thirty to change. We'd recommend having a look.

What about work stuff?

This is the big one people miss. Do not paste confidential work documents, client information, financial data, or anything your employer would have strong feelings about into a free public AI tool. Your workplace may have specific policies about this. If you're not sure, check before you paste. The café analogy applies here more than anywhere: you wouldn't read a confidential document out loud at your local. Same rules.

Photos and images.

Think about what's in the background of anything you upload. Documents on your desk. Addresses on envelopes. Other people's faces. AI tools can see everything in an image, not just the thing you're pointing at.

Free vs paid.

Free tiers of AI tools generally have broader data collection than paid tiers or enterprise versions. If you're using AI for sensitive work, it's worth understanding which version you're on and what that means for your data.

The one thing worth doing today.

Go into the settings of whichever AI tool you use most and have a look at the privacy controls. You don't need to understand all of it. Just have a look at what's there and make the choices that feel right for you.

Links to privacy settings for the main tomato sauces:

And remember, if the sauce doesn't feel right, don't eat it.

Seriously. If a tool makes you uncomfortable, don't use it. There are enough options on the shelf that you never have to use something that doesn't sit right with you. Your comfort comes first. Always.

Now flip that bottle back over and get cooking.

FAQs

Frequently Asked Questions. From real people.

We speak to a lot of people about sauce, and they tell us that this AI stuff can feel a bit like reading the back of a sauce bottle in a foreign language. We get it.

So here are real questions from real people who just want to know what’s actually in the bottle. No jargon, no spin, just straight answers.

And if you come across a word you don't recognise, we've got a glossary for that too.

Is it safe to use AI tools like ChatGPT and Claude?

Yes, with common sense. Treat them like a conversation in a public café: don't share passwords, confidential work documents, financial data, or personal information you wouldn't say out loud in front of strangers. The tools themselves are not out to get you. Just apply the same judgment you'd use anywhere online.

Does AI save my conversations?

Most free AI tools do save your conversations and may use them to improve their models. You can turn this off in the privacy settings of most major tools, including ChatGPT, Claude, and Gemini. It takes about sixty seconds and is worth doing.

Can my boss see what I type into AI tools?

If you're using a free consumer version of an AI tool on your personal device, your employer generally cannot see your conversations. However, if your workplace provides AI tools or has enterprise agreements, different rules may apply. When in doubt, check your workplace policy before pasting anything work-related.

What should I never put into an AI tool?

Passwords, financial account details, confidential client information, sensitive work documents, home addresses, and anything your employer would have strong feelings about. When in doubt, leave it out.

Is the free version of AI tools less private than paid?

Generally yes. Free tiers typically have broader data collection than paid or enterprise versions. If you're using AI for sensitive work, it's worth understanding which version you're on and what that means for your data.

Which AI tool is the most private?

All major AI tools have privacy settings worth checking. Claude, ChatGPT, and Gemini all allow you to opt out of having your conversations used for training. No tool is completely private but all of them give you meaningful control if you look for it.

What is a large language model (LLM)?

It's the technical name for the type of AI behind tools like ChatGPT, Claude, and Gemini. A large language model has been trained on an enormous amount of text (books, articles, websites, conversations) and learned to understand and generate language from it. The name sounds complicated. The experience is just a conversation.

What is AI literacy?

It's the ability to understand, use, and think clearly about AI tools. Not coding. Not a certificate. Just knowing what AI can and can't do, how to get useful results from it, and how to look after yourself while you're using it. Which is exactly what TSC is here for.

Can AI be trusted?

Mostly yes, with the same common sense you'd apply anywhere online. AI tools are genuinely useful and the major ones are built by companies with serious privacy and security obligations. That said, they're not infallible. They can get things wrong, fill in gaps with confident-sounding nonsense, and occasionally misread what you're asking. The trick isn't blind trust or blanket suspicion. It's knowing when to verify what comes back. Think of it like a very knowledgeable friend who occasionally makes things up. Useful, but worth a second opinion on the big stuff.

If I download ChatGPT on my phone, can it see everything?

Short answer: no. ChatGPT can only see what you actively type, paste, or upload into it. It doesn't have access to your photos, emails, messages, contacts, or anything else on your phone unless you specifically share it in the conversation or give the app permission to access it. It's not sitting in the background having a snoop around. You're in control of what goes in. The same common sense applies as anywhere online, though. Don't share passwords, financial details, or anything you wouldn't say out loud in a café.

Got a question we haven't answered? Send it to hello@thesaucecollective.com.au and we'll add it to the list.